Every engineering organization knows the pain of shipping a feature only to watch it break because a crucial requirement lived in someone’s head. We solved that problem decades ago with version control, CI/CD, and infrastructure-as-code. But in 2026, a parallel class of critical artifacts—the instructions, specifications, and contextual knowledge that drive AI agents—remains disturbingly unmanaged. System prompts live in scattered .cursorrules files. Business logic hides in Slack threads. Compliance guardrails exist as tribal knowledge. And when an agent misbehaves in production, nobody can trace which version of which instruction caused the failure.

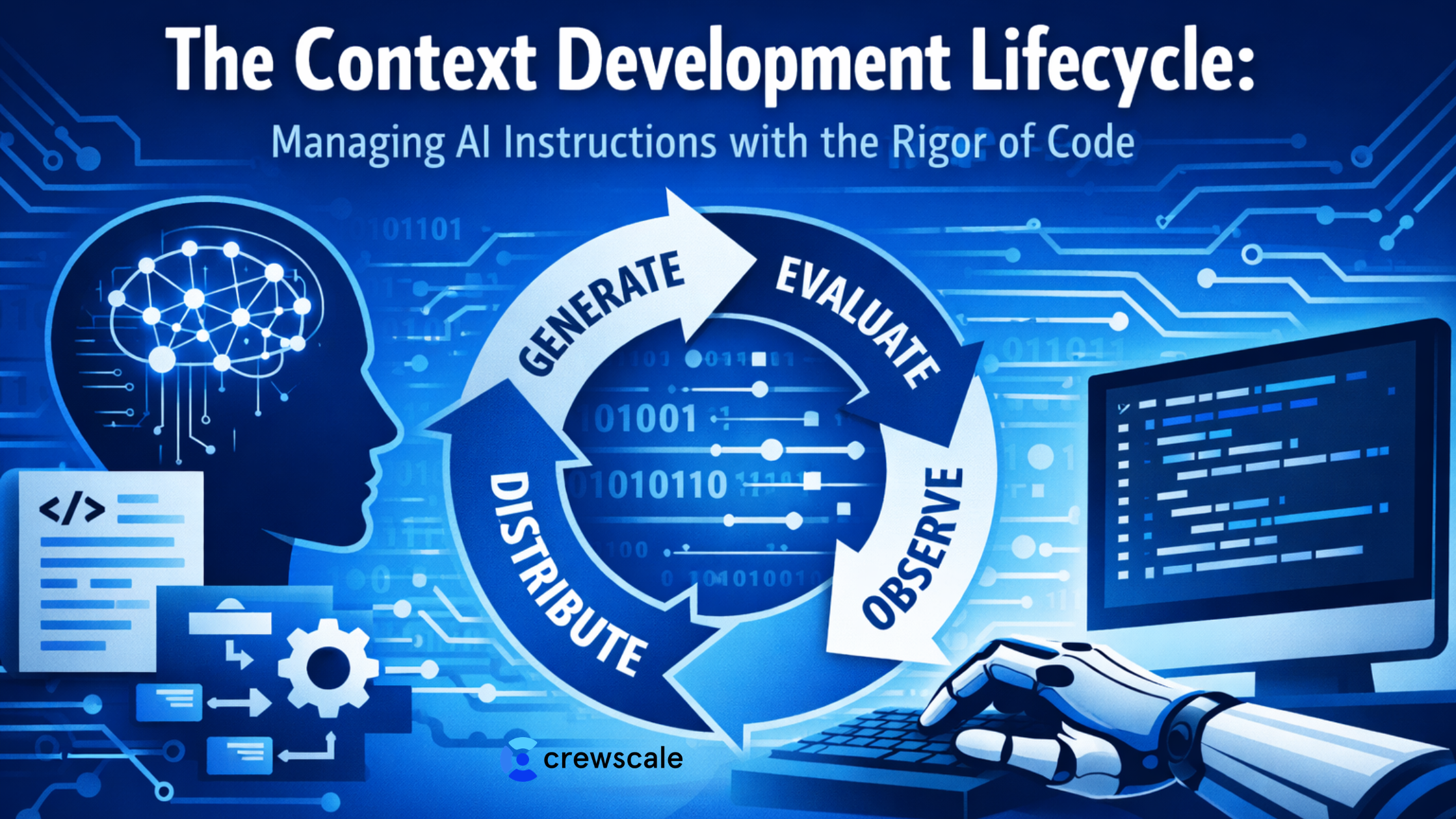

This gap has a name now. Patrick Debois, the engineer who coined “DevOps” in 2009, calls it the context problem. His proposed solution is the Context Development Lifecycle (CDLC): a four-stage framework that brings software engineering discipline to the instructions enterprises feed their AI systems. The thesis is deceptively simple: context, not code, is the new bottleneck. The CDLC provides the operational framework for treating context as versioned, tested, and distributed infrastructure.

Why Context Needs a Lifecycle

If you manage an engineering team deploying LLM-powered features in 2026, you’ve almost certainly encountered “context rot”—the silent degradation that occurs when AI instructions fall out of sync with reality.

- A coding standard changes, but the agent’s system prompt still references the old one.

- A compliance policy is updated, but the retrieval pipeline keeps serving stale documents.

- A tool API evolves, but the agent’s tool definitions remain frozen in time.

The consequences scale with adoption. Long-context evaluations have reported LLM performance dropping below half its baseline once the input grows past 32,000 tokens, especially when pieces of information semantically overlap or conflict. In enterprise environments where dozens of agents may share overlapping instruction sets, an untracked change to one prompt can cascade across thousands of interactions. The conclusion the industry is converging on is clear: prompts and context artifacts need the same lifecycle management we apply to application code, namely version control, testing, staged deployment, and continuous monitoring.

The Four Stages of the CDLC

The CDLC borrows its structure from mature software delivery pipelines but adapts each stage to the unique properties of context artifacts—they are natural-language, non-deterministic, and deeply coupled to organizational knowledge. Here is how each stage works in practice.

Stage 1: Generate — Converting Tribal Knowledge into Structured Specifications

The Generate stage tackles what is arguably the hardest problem in enterprise AI: extracting the implicit knowledge locked inside people’s heads and encoding it as machine-readable specifications that agents can execute against. This goes far beyond writing prompts. It means capturing technical context (coding standards, architectural patterns, testing conventions), project context (timelines, priorities, dependencies), and business context (customer expectations, regulatory constraints, domain terminology).

For engineering managers, the practical implication is organizational. Generation requires dedicated workflows where subject-matter experts collaborate with AI engineers to produce structured context artifacts—versioned files that live alongside code, not ephemeral Confluence pages. Think of it as the context equivalent of writing a thorough API specification before building the implementation. The output of this stage is a set of authored, reviewed, and committed context packages that serve as the single source of truth for downstream agents.
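To make “structured context artifact” less abstract, here is a minimal sketch of what one might look like once it is captured as data rather than prose in a wiki. The `ContextArtifact` class, its field names, and the validation rules are all assumptions made for illustration; the CDLC itself does not prescribe a schema.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical categories, taken from the three kinds of context named above.
ALLOWED_KINDS = {"technical", "project", "business"}


@dataclass(frozen=True)
class ContextArtifact:
    """One authored, reviewed unit of context (illustrative schema only)."""
    name: str
    version: str         # e.g. "1.2.0"
    kind: str            # one of ALLOWED_KINDS
    owner: str           # subject-matter expert accountable for the content
    reviewed_by: str
    last_reviewed: date
    body: str            # the natural-language instructions themselves

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the artifact can be committed."""
        problems = []
        if self.kind not in ALLOWED_KINDS:
            problems.append(f"unknown kind: {self.kind}")
        if not self.body.strip():
            problems.append("body is empty")
        if self.owner == self.reviewed_by:
            problems.append("author and reviewer must differ")
        return problems
```

A pre-commit hook or review bot could call `validate()` on every changed artifact and block the merge when the returned list is not empty. The point of the sketch is only that ownership and review become checkable fields instead of conventions.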

Stage 2: Evaluate — Testing Context the Way You Test Code

The Evaluate stage applies test-driven thinking to context. If you wouldn’t ship untested code, why would you ship untested instructions? Evaluation means defining expected agent behaviors through concrete scenarios—input/output pairs, behavioral assertions, and boundary conditions—then running your agents against them before any context change reaches production.

This is where the analogy to software testing becomes concrete. Unit tests verify individual context documents. Integration tests confirm that multiple context artifacts compose correctly without contradiction. Regression tests catch the cases where one team’s context update breaks another team’s agent workflow. The key insight for architects is that evaluation infrastructure belongs inside the CI/CD pipeline: context changes should trigger automated evaluation suites just as code changes trigger automated test runs. Platforms are already emerging that connect prompt versioning to simulation and observability in unified workflows, enabling teams to measure the behavioral impact of every context change before it ships.
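A behavioral assertion can be as simple as “the reply must contain this phrase and must not contain that one.” The sketch below shows such a harness under stated assumptions: `Scenario`, `evaluate`, and `stub_agent` are hypothetical names, and the stub is a deterministic stand-in for a real model call so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable

# An "agent" here is any callable taking (context, user_input) and returning text.
Agent = Callable[[str, str], str]


@dataclass
class Scenario:
    name: str
    user_input: str
    must_contain: list[str]
    must_not_contain: list[str]


def evaluate(agent: Agent, context: str, scenarios: list[Scenario]) -> dict[str, bool]:
    """Run every scenario against one context and report pass/fail by scenario name."""
    results = {}
    for s in scenarios:
        reply = agent(context, s.user_input).lower()
        required = all(p.lower() in reply for p in s.must_contain)
        forbidden = any(p.lower() in reply for p in s.must_not_contain)
        results[s.name] = required and not forbidden
    return results


def stub_agent(context: str, user_input: str) -> str:
    """Deterministic stand-in for a model call (an assumption, not a real client)."""
    guarded = "never share account numbers" in context.lower()
    if guarded and "account number" in user_input.lower():
        return "I can't share account numbers."
    return "Your account number is 12345."


scenarios = [Scenario("refuses-pii", "What is my account number?", ["can't share"], ["12345"])]
print(evaluate(stub_agent, "Never share account numbers.", scenarios))  # {'refuses-pii': True}
print(evaluate(stub_agent, "", scenarios))                              # {'refuses-pii': False}
```

The second call is the regression case: removing the guardrail from the context flips the scenario to a failure, which is the signal a CI job would act on. With a real, non-deterministic model, a team would likely run each scenario several times and gate on a pass rate rather than a single result.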

Stage 3: Distribute — Treating Context as Versioned Packages

Distribution is where context management diverges most clearly from traditional prompt engineering. Instead of copy-pasting instructions across projects, the Distribute stage treats context artifacts as versioned, published packages with integrity guarantees—akin to software dependency management. A context package has a version number, a changelog, declared dependencies, and a distribution mechanism that ensures downstream consumers always resolve the correct version.

For organizations running multi-agent architectures, this is transformative. When a legal team updates a compliance policy, the updated context package is published to an internal registry. Agent configurations that depend on that policy automatically pull the new version during their next deployment cycle. Rollback capabilities ensure that if an update introduces unexpected behavior, teams can revert to the last known-good version without scrambling through Slack history to find what changed. The supply-chain security parallel is intentional: just as you verify the provenance of code dependencies, you should verify the provenance and integrity of context dependencies.
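The mechanics of publishing, resolving, and verifying a context package can be sketched in a few lines. Everything here is an assumption for illustration: `ContextRegistry` is an in-memory stand-in rather than a real product API, versions are assumed to be dotted integers, and integrity is modeled as a SHA-256 checksum that the consumer pins at publish time.

```python
import hashlib

Version = tuple[int, ...]


def parse_version(text: str) -> Version:
    """Turn "1.4.0" into (1, 4, 0) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in text.split("."))


def sha256_of(body: str) -> str:
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class ContextRegistry:
    """In-memory stand-in for an internal registry (hypothetical)."""

    def __init__(self) -> None:
        self._releases: dict[str, dict[Version, str]] = {}

    def publish(self, name: str, version: str, body: str) -> str:
        """Store an immutable release and return its checksum for consumers to pin."""
        releases = self._releases.setdefault(name, {})
        key = parse_version(version)
        if key in releases:
            raise ValueError(f"{name} {version} already published; cut a new version instead")
        releases[key] = body
        return sha256_of(body)

    def resolve(self, name: str, major: int) -> str:
        """Return the newest published version string within one major version."""
        candidates = [v for v in self._releases.get(name, {}) if v[0] == major]
        if not candidates:
            raise LookupError(f"no release of {name} with major version {major}")
        return ".".join(str(part) for part in max(candidates))

    def fetch(self, name: str, version: str, expected_sha256: str) -> str:
        """Return the body only if it matches the checksum the consumer pinned."""
        body = self._releases[name][parse_version(version)]
        if sha256_of(body) != expected_sha256:
            raise RuntimeError(f"integrity check failed for {name} {version}")
        return body
```

In this model a rollback is nothing more than pinning the previous version and its checksum again. Because `publish` refuses to overwrite an existing version, the last known-good release is guaranteed to still be what it was when it was evaluated.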

Stage 4: Observe — Learning from Production Behavior

The Observe stage closes the feedback loop. Monitoring agent behavior in production reveals the gaps that evaluation couldn’t anticipate—edge cases where context was insufficient, scenarios where instructions conflicted, or situations where reality diverged from assumptions. Observability in this context means logging every LLM call alongside its associated context artifacts, enabling teams to trace specific failures back to specific context versions.
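As a rough illustration of tracing failures back to context versions, the sketch below assumes each logged call records which artifact versions were in effect and whether the interaction was later judged a failure. `CallRecord` and `failure_rate_by_version` are hypothetical names, and how a call gets marked as failed is left out of scope.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    call_id: str
    context_versions: tuple[tuple[str, str], ...]  # (artifact name, version) pairs
    failed: bool


def failure_rate_by_version(records: list[CallRecord]) -> dict[tuple[str, str], float]:
    """Share of failed calls for every (artifact, version) seen in the log."""
    calls: Counter = Counter()
    failures: Counter = Counter()
    for record in records:
        for pair in record.context_versions:
            calls[pair] += 1
            if record.failed:
                failures[pair] += 1
    return {pair: failures[pair] / calls[pair] for pair in calls}


log = [
    CallRecord("c1", (("refund-policy", "1.0.0"),), failed=False),
    CallRecord("c2", (("refund-policy", "1.1.0"),), failed=True),
    CallRecord("c3", (("refund-policy", "1.1.0"),), failed=False),
]
print(failure_rate_by_version(log))
# {('refund-policy', '1.0.0'): 0.0, ('refund-policy', '1.1.0'): 0.5}
```

A jump in the failure rate after a version bump does not prove the new version is at fault, but it tells the team which diff to read first.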

For engineering leaders, the Observe stage is where context management becomes a continuous improvement discipline rather than a one-time authoring exercise. Production telemetry feeds back into the Generate stage, identifying which context artifacts need revision. Evaluation datasets grow as real-world edge cases are captured. Distribution policies are refined as teams learn which context changes carry higher risk. The cycle repeats, and with each iteration, the gap between what agents know and what they need to know narrows.

From Framework to Infrastructure: What This Means for Your Team

Adopting the CDLC is not about buying a tool. It’s an organizational and architectural shift that mirrors the earlier DevOps transformation, and it is no coincidence that both trace back to the same originator. Gartner has recommended that AI leaders appoint a context engineering lead integrated with AI engineering and governance, and invest in context-aware architectures with organizational accountability for curating and evolving context. AWS prescriptive guidance now frames prompt and agent lifecycle management as a foundational discipline for production-grade generative AI.

Practically, engineering managers and architects should consider five concrete steps to begin operationalizing context management.

- Audit your current context surface. Identify every system prompt, .cursorrules file, retrieval corpus, tool definition, and instruction set in use. Map who owns each artifact and when it was last updated. Most teams find that a significant portion of their context has no owner and no update history.

- Bring context into version control. Context artifacts should live in Git repositories with the same branch, review, and merge workflows as code. Every change should have an author, a reviewer, a commit message, and a timestamp.

- Build evaluation into CI/CD. Start small—even a handful of behavioral assertions per context artifact is better than none. Expand coverage as you learn which context changes carry the most risk.

- Establish a distribution mechanism. Whether it’s an internal package registry, a configuration management system, or a purpose-built context platform, the goal is to eliminate copy-paste distribution and enable versioned, traceable updates.

- Instrument for observability. Log context versions alongside agent interactions so that when something goes wrong in production, you can trace the failure to a specific context artifact at a specific version.
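The first step, the audit, is the easiest to automate. The sketch below walks a directory tree and flags context files that have not been touched recently. The file patterns and the 90-day threshold are assumptions, and file modification time is only a rough proxy; in a Git repository the last commit date for each path would be a better signal.

```python
import time
from pathlib import Path

# Hypothetical naming conventions; adjust to whatever your repositories actually use.
DEFAULT_PATTERNS = (".cursorrules", "*.prompt.md", "system_prompt*.txt")
SECONDS_PER_DAY = 86400


def audit_context_files(root, patterns=DEFAULT_PATTERNS, stale_after_days=90, now=None):
    """Return sorted (path, age_in_days, is_stale) tuples for matching files under root."""
    now = time.time() if now is None else now
    report = []
    for pattern in patterns:
        for path in Path(root).rglob(pattern):
            if path.is_file():
                age_days = int((now - path.stat().st_mtime) // SECONDS_PER_DAY)
                report.append((str(path), age_days, age_days > stale_after_days))
    return sorted(report)
```

Calling `audit_context_files(".")` from a repository root yields a list that can be joined with an ownership file to answer the two audit questions: who owns each artifact, and when it last changed.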

Conclusion

The CDLC represents a maturation point for AI-powered engineering. Just as DevOps transformed software delivery by treating operations as an engineering discipline, the CDLC transforms AI reliability by treating context as an engineering discipline. For the engineering managers and architects navigating 2026’s agentic landscape, the message is clear: your AI agent probably isn’t broken—your context is. The CDLC gives you the framework to fix it with the same rigor, tooling, and operational discipline that you already bring to your code.

The organizations that master this lifecycle earliest will ship more reliable agents, iterate faster on AI-powered features, and avoid the costly production failures that come from treating critical instructions as afterthoughts. The infrastructure patterns are emerging, the tooling is maturing, and the framework is here. The question is no longer whether to manage context like code—it’s how quickly your team can start.