Nearly 78% of employees now use unauthorized AI tools at work, feeding proprietary code, customer data, and trade secrets into consumer-grade models that their IT teams cannot see or control. At the same time, a parallel movement called “vibe coding” is turning natural-language prompts into production applications, making every knowledge worker a potential software developer. Together, these forces are rewriting the rules of enterprise innovation and creating a governance vacuum that threatens intellectual property, compliance, and security.

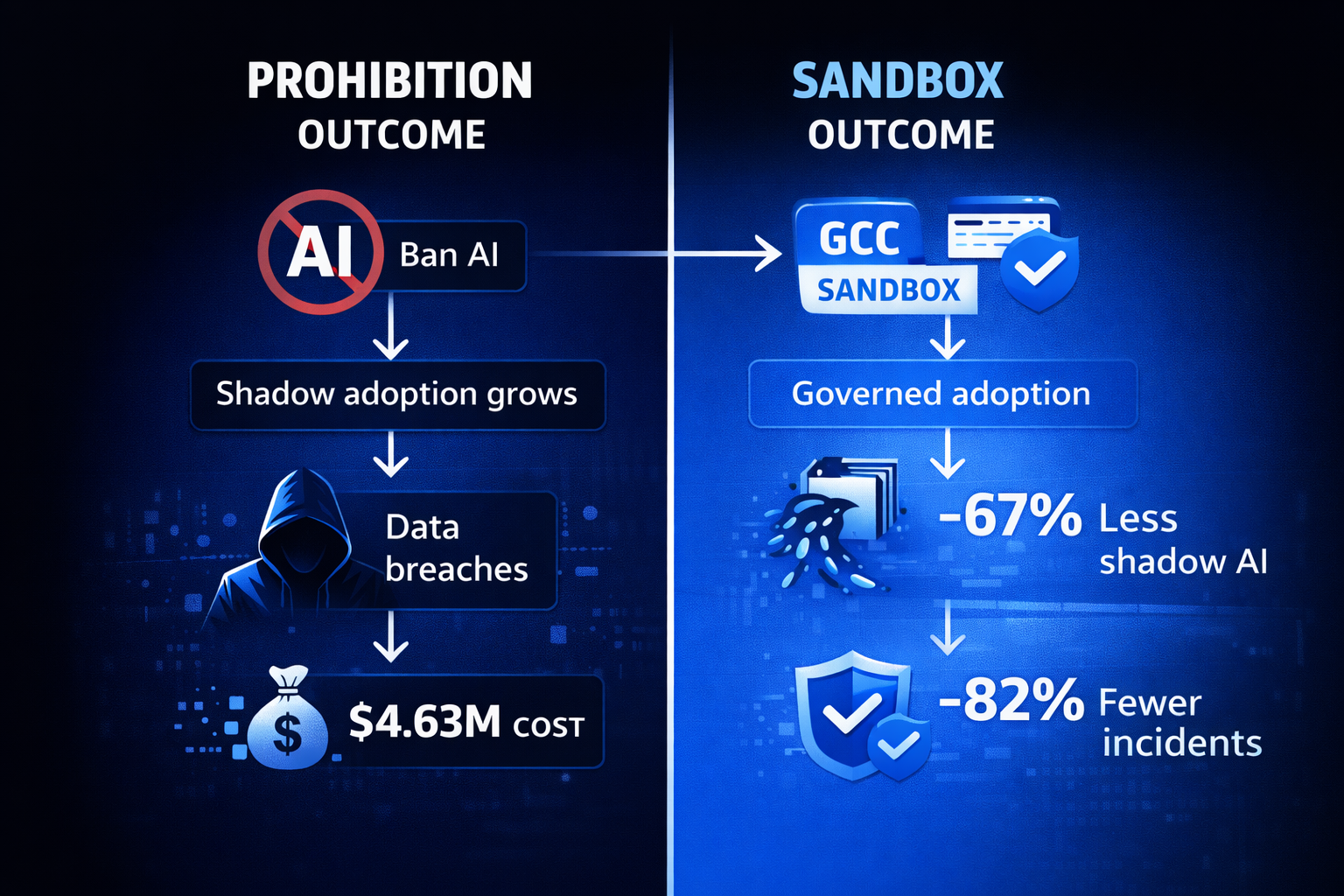

The solution is not to ban AI tools, which only drives adoption further underground. Instead, forward-thinking organizations are turning to Global Capability Centers (GCCs) as governed “sandboxes” where developers and business teams can experiment with AI freely, without exposing the enterprise to uncontrolled data leakage. This article examines how shadow AI and vibe coding converge, why prohibition fails, and how the GCC model offers a scalable path to secure AI-driven innovation.

The Shadow AI Problem: Innovation Without Guardrails

Shadow AI describes the use of unapproved artificial intelligence tools within an organization. These are typically consumer-grade chatbots, code generators, and image models accessed through personal accounts. The behavior mirrors the “shadow IT” wave of the early 2010s, but with far higher stakes: unlike a rogue spreadsheet, a single AI prompt can exfiltrate an entire codebase or customer dataset.

The scale is staggering. Several organizations have already experienced internal data leaks through generative AI, in which the leaked data was pasted into prompts by employees rather than stolen through traditional exfiltration vectors. Almost 68% of unauthorized enterprise GenAI usage occurs through free-tier personal accounts, completely invisible to security teams.

The financial toll is equally alarming. Shadow AI incidents now account for 20% of all data breaches and cost $4.63 million per breach, compared with $3.96 million for standard incidents, a premium of roughly $670,000. Yet prohibition has proven ineffective. Research shows that 63% of employees consider it acceptable to use AI tools without IT oversight when no company-approved alternative exists.

The Vibe Coding Wave: When Everyone Becomes a Developer

Coined by OpenAI co-founder Andrej Karpathy in February 2025, “vibe coding” describes the practice of building software by describing desired functionality in natural language and letting AI generate the code. The concept resonated so strongly that Collins Dictionary named it Word of the Year for 2025, and adoption has been explosive.

The productivity gains are real. Industry benchmarks show a 26% improvement in overall development speed, with routine tasks completing up to 51% faster. For standardized operations like API integrations and CRUD scaffolding, time savings can reach 81%. Tools like Cursor, Replit, Bolt, and Lovable have made AI-assisted development accessible to product managers, designers, and business analysts, not just professional engineers.

But vibe coding introduces a unique governance challenge. When non-engineers generate production code through prompts, traditional code review processes break down. The developer may not fully understand the code they have shipped, creating what practitioners call “comprehension debt”: technical debt compounded by the fact that no human ever deeply understood the implementation. In an enterprise context, this means proprietary business logic may be leaking into AI model training sets with every prompt, and the resulting code may contain vulnerabilities that no one on the team can identify.

Where Shadow AI Meets Vibe Coding: The Convergence Risk

Shadow AI and vibe coding are not separate problems; they are two faces of the same disruption. When developers use unauthorized AI coding assistants, they create shadow AI. When business analysts use consumer AI tools to generate internal applications, they practice unsanctioned vibe coding. The enterprise sees neither the data flowing out nor the code flowing in.

The convergence creates a compounding risk profile. An employee pastes proprietary algorithm logic into ChatGPT to debug it (shadow AI), then uses the AI’s improved version in production without review (vibe coding). The enterprise has simultaneously leaked intellectual property and introduced unvetted code, and has no audit trail for either event.

The GCC Sandbox: A Governed Home for AI Innovation

Global Capability Centers offer a structural answer to the shadow AI dilemma. India alone now hosts nearly 1,800 GCCs employing 1.9 million professionals, generating $64.6 billion in revenue. These centers have evolved from cost-arbitrage back offices into innovation hubs, and their architecture makes them uniquely suited to govern AI experimentation.

How GCCs Function as AI Sandboxes

- Isolated network environments: GCCs operate within dedicated VPCs and data-classified zones, ensuring that AI experiments access only pre-approved datasets. Prompts never reach consumer-grade endpoints; instead, they are routed through API endpoints covered by enterprise agreements, with audit logging enabled.

- Approved tool catalogs: Rather than banning AI, GCCs curate sanctioned toolchains, such as enterprise versions of Copilot, Amazon CodeWhisperer (now part of Amazon Q Developer), or self-hosted open-source models, so developers get the productivity benefits without the data-leakage risk.

- Data Loss Prevention (DLP) at the prompt layer: Modern GCC security stacks intercept AI prompts before they leave the network, redacting PII, proprietary code snippets, and regulated data automatically.

- Code review gates for AI-generated output: Vibe-coded applications pass through mandatory static analysis, security scanning, and human review checkpoints before they touch any staging or production environment.
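To make the first three controls in the list above concrete, here is a minimal sketch of a prompt gateway that checks a tool against an approved catalog, redacts sensitive strings, and writes an audit record. It is an illustration under stated assumptions, not a description of any particular GCC's stack: the catalog entries, the `gate_prompt` and `redact` names, and the three regex patterns are all hypothetical, and a production DLP engine would use far richer detectors than regular expressions.

```python
import json
import re
from datetime import datetime, timezone

# Hypothetical catalog of sanctioned tools; real entries would come from policy.
APPROVED_TOOLS = {"enterprise-copilot", "self-hosted-llm"}

# Illustrative detectors only; real DLP covers many more data classes.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each detected pattern with a placeholder and count the hits."""
    counts: dict[str, int] = {}
    for label, pattern in REDACTION_PATTERNS.items():
        prompt, hits = pattern.subn(f"[{label}_REDACTED]", prompt)
        if hits:
            counts[label] = hits
    return prompt, counts


def gate_prompt(user: str, tool: str, prompt: str, audit_log: list[str]) -> str:
    """Return the redacted prompt for an approved tool, logging every decision."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
    }
    if tool not in APPROVED_TOOLS:
        record["action"] = "blocked"
        audit_log.append(json.dumps(record))
        raise PermissionError(f"{tool} is not in the approved catalog")
    clean, counts = redact(prompt)
    record["action"] = "forwarded"
    record["redactions"] = counts
    audit_log.append(json.dumps(record))
    return clean


if __name__ == "__main__":
    log: list[str] = []
    text = "Email alice@example.com, key AKIAIOSFODNN7EXAMPLE"
    print(gate_prompt("dev42", "self-hosted-llm", text, log))
    # prints: Email [EMAIL_REDACTED], key [AWS_KEY_REDACTED]
```

Note that the audit record stores only redaction counts, never the raw prompt, so the log itself does not become a second copy of the sensitive data.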

According to EY’s analysis of India’s GCC ecosystem, 83% of GCCs are now scaling GenAI beyond pilots into production workflows. This signals a maturation from experimental “innovation labs” to enterprise-grade AI platforms with proper governance guardrails baked in.
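The code review gate described in the list above can also be sketched in a few lines. The example below is a deliberately small stand-in for real static analysis: it parses AI-generated Python with the standard `ast` module, flags calls on a hypothetical deny-list, and only clears the code for staging when a human reviewer has also signed off. The `RISKY_CALLS` set and the `review_gate` and `ready_for_staging` names are assumptions made for illustration; an actual gate would run full SAST, dependency, and secret scanning.

```python
import ast

# Hypothetical deny-list; a real gate would rely on dedicated scanning tools.
RISKY_CALLS = {"eval", "exec", "os.system", "subprocess.call", "pickle.loads"}


def call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for simple calls, else ''."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def review_gate(source: str) -> list[str]:
    """Return findings in line order; an empty list means the check passed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"line {exc.lineno}: does not parse"]
    hits = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Call):
            name = call_name(node)
            if name in RISKY_CALLS:
                hits.append((node.lineno, name))
    return [f"line {lineno}: call to {name}" for lineno, name in sorted(hits)]


def ready_for_staging(source: str, human_approved: bool) -> bool:
    """Both the automated check and a human sign-off are required."""
    return human_approved and not review_gate(source)


if __name__ == "__main__":
    generated = "import os\nos.system('ls')\nresult = eval('1 + 1')\n"
    for finding in review_gate(generated):
        print(finding)
```

Requiring both conditions in `ready_for_staging` reflects the point made above: automated scanning narrows what a reviewer must read, but it does not replace someone who understands the change.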

Building the Sandbox: A Practical Framework

Organizations looking to channel shadow AI and vibe coding into their GCC operations should follow a phased approach that balances speed-to-value with enterprise risk management.

The evidence supports this approach. Organizations that implement clear AI policies and approved alternatives see 67% less shadow AI usage. One documented case reduced unauthorized tool sprawl from 19 tools to 3 within four months, with security incidents declining by 82%.
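A reduction in tool sprawl like the one cited above can only be tracked if the starting inventory is known. The following is a minimal sketch of that first measurement, assuming a hypothetical proxy log in which each line holds a timestamp, a user, and a URL separated by whitespace. The domain lists are illustrative placeholders (the `.example` host is made up), and `shadow_ai_inventory` is a name chosen here, not part of any existing product.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative lists; maintain real ones from your own policy and telemetry.
KNOWN_AI_DOMAINS = {
    "chatgpt.com",
    "claude.ai",
    "gemini.google.com",
    "llm.sanctioned.example",  # hypothetical sanctioned endpoint
}
SANCTIONED_DOMAINS = {"llm.sanctioned.example"}


def shadow_ai_inventory(log_lines) -> Counter:
    """Count requests per unsanctioned AI domain.

    Assumes each line looks like '<timestamp> <user> <url>'.
    Lines that do not match that shape are skipped.
    """
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        host = urlsplit(parts[2]).hostname
        if host in KNOWN_AI_DOMAINS and host not in SANCTIONED_DOMAINS:
            hits[host] += 1
    return hits


if __name__ == "__main__":
    sample = [
        "2025-01-06T09:00:00Z ana https://chatgpt.com/c/123",
        "2025-01-06T09:05:00Z raj https://claude.ai/chat",
        "2025-01-06T09:07:00Z ana https://chatgpt.com/c/456",
        "2025-01-06T09:09:00Z lee https://llm.sanctioned.example/v1/chat",
    ]
    inventory = shadow_ai_inventory(sample)
    print(f"{len(inventory)} unsanctioned tools, {sum(inventory.values())} requests")
    # prints: 2 unsanctioned tools, 3 requests
```

Re-running the same count each month gives a sprawl figure, the number of distinct unsanctioned domains, that can be compared over time.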

The Competitive Imperative: Act Now or Fall Behind

The GCC model’s appeal extends beyond risk mitigation. With India’s GCC workforce projected to reach 2.5 million by 2030 and revenues approaching $100 billion, these centers represent the largest concentration of technical talent operating under enterprise governance frameworks. Organizations that establish AI sandboxes within their GCCs gain a dual advantage: they capture the productivity gains of AI-assisted development while maintaining the compliance posture that regulators and customers increasingly demand.

The alternative, ignoring shadow AI and hoping vibe coding stays out of production, is a risky bet: Gartner projects that 40% of enterprises will suffer a data breach attributable to shadow AI by 2030. The organizations that thrive will be those that stopped trying to outlaw innovation and instead gave it a governed home.

Conclusion

Shadow AI and vibe coding are not threats to be eliminated. They are signals of genuine demand for AI-powered productivity. The enterprises that succeed will be those that redirect this energy into governed environments, and GCCs provide the ideal infrastructure: isolated networks, curated toolchains, prompt-level DLP, and mandatory code review, all backed by deep technical talent.

Start by auditing your current shadow AI footprint this quarter, then provision sanctioned AI tools within your GCC environment. For organizations seeking expert guidance on building secure AI-first GCC operations, Crewscale, in partnership with its sister company Beanbag AI, helps teams design and scale governed innovation frameworks that turn developer enthusiasm into enterprise advantage.