Vibe coding has fundamentally reshaped how software gets built. Coined by Andrej Karpathy in early 2025, the term describes a development paradigm where engineers converse with AI assistants in natural language and let the model produce working code. By some projections, AI agents will be writing ten times more code than humans by the end of 2026. Executives at Microsoft and Google have said that roughly 30% of their companies' code is now written by AI. The productivity gains are extraordinary, but they come tethered to a security crisis that most enterprises are only beginning to acknowledge.

Veracode's 2025 GenAI Code Security Report analyzed over 100 large language models across 80 coding tasks and found that 45% of the AI-generated code samples introduced security vulnerabilities, many of them critical flaws from the OWASP Top 10. Models failed to defend against cross-site scripting in 86% of the relevant test cases and against SQL injection in 20%, and 14% of cryptographic implementations relied on weak or broken algorithms. These are not edge cases. They are the predictable output of models trained on the open-source commons, where insecure patterns are often the most prevalent patterns.

This article provides a practical playbook for CISOs, engineering leaders, and security architects who need to govern AI-generated code at enterprise scale. It covers the threat landscape, introduces proven governance frameworks, details how to build automated review pipelines, and maps the compliance landscape so your organization can capture the speed of vibe coding without inheriting its risk.

Understanding the Vibe Coding Threat Landscape

The security risks of vibe coding are structural, not incidental. Large language models learn by pattern matching against massive code repositories, and if an insecure pattern like a string-concatenated SQL query appears frequently in training data, the model reproduces it confidently. Georgetown University’s Center for Security and Emerging Technology identifies three broad risk categories: models generating insecure code, models themselves being vulnerable to adversarial manipulation, and downstream feedback loops where insecure AI output poisons future training data.
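To make the string-concatenated SQL pattern concrete, the following is a minimal sketch using Python's standard `sqlite3` module. The table, the function names, and the payload are illustrative assumptions, not taken from any of the reports cited here.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
    conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 0), ("root", 1)])
    return conn


def find_user_insecure(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is spliced directly into the SQL text.
    query = "SELECT name FROM users WHERE name = '" + name + "'"
    return [row[0] for row in conn.execute(query)]


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver passes the value separately from the SQL text.
    query = "SELECT name FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


conn = make_db()
payload = "x' OR '1'='1"
leaked = find_user_insecure(conn, payload)  # the injected OR clause matches every row
blocked = find_user_safe(conn, payload)     # the payload is treated as a literal name
```

Both functions look equally plausible at a glance, which is part of why the insecure variant survives a cursory review.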

Compounding the technical risk is a behavioral one. Developers tend to feel less responsible for AI-generated code and to spend less time reviewing it. Because of this false confidence, code produced by an AI assistant often receives less scrutiny than code written by a junior engineer, even though it may be at least as likely to contain critical flaws.

Shadow AI and the Invisible Attack Surface

Vibe coding tools have democratized software development, empowering non-technical employees to build internal applications that touch production data. This creates a massive shadow AI problem where applications materialize outside the purview of security teams. These citizen-developed apps typically lack environment separation, role-based access controls, audit logging, and proper secrets management. Security teams cannot defend what they cannot see, and the invisible attack surface is growing faster than most enterprises realize.

Real-World Breach Scenarios

The consequences of ungoverned vibe coding are not theoretical. Security firm Wiz documented a case where a misconfigured Supabase database, built rapidly via AI-assisted development, exposed 1.5 million API keys and 35,000 user email addresses. In another incident, a startup called Enrichlead used the Cursor AI editor to write its entire codebase. The AI placed all security logic on the client side, and within 72 hours, users discovered they could bypass the paywall by changing a single value in the browser console. The project shut down entirely. Vibe coding agents routinely neglect authentication, rate limiting, and input validation when left unsupervised.
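The paywall bypass comes down to where the access decision is made. The sketch below is a hypothetical illustration, not a reconstruction of the affected codebase: `PAID_USERS`, `Request`, and `can_access_premium` are invented names, and the user ID is assumed to come from an already verified session.

```python
from dataclasses import dataclass

# Hypothetical server-side record of paying accounts (in practice, a database lookup).
PAID_USERS = {"user-1"}


@dataclass(frozen=True)
class Request:
    user_id: str              # assumed to come from a verified server-side session
    client_claims_paid: bool  # value sent by the browser; never trusted


def can_access_premium(request: Request) -> bool:
    # The decision uses server-held state only. The client-supplied flag is
    # deliberately ignored, so editing it in the browser console changes nothing.
    return request.user_id in PAID_USERS
```

A client-side flag can still drive what the interface shows, but the server has to make the authorization decision on every request.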

Enterprise Governance Frameworks for AI-Generated Code

Governing vibe coding is not about banning AI tools. It is about embedding security into the same workflow that makes these tools so productive. Several governance models have emerged in 2025 and 2026 that provide structured approaches to this challenge.

The SHIELD Framework from Palo Alto Networks

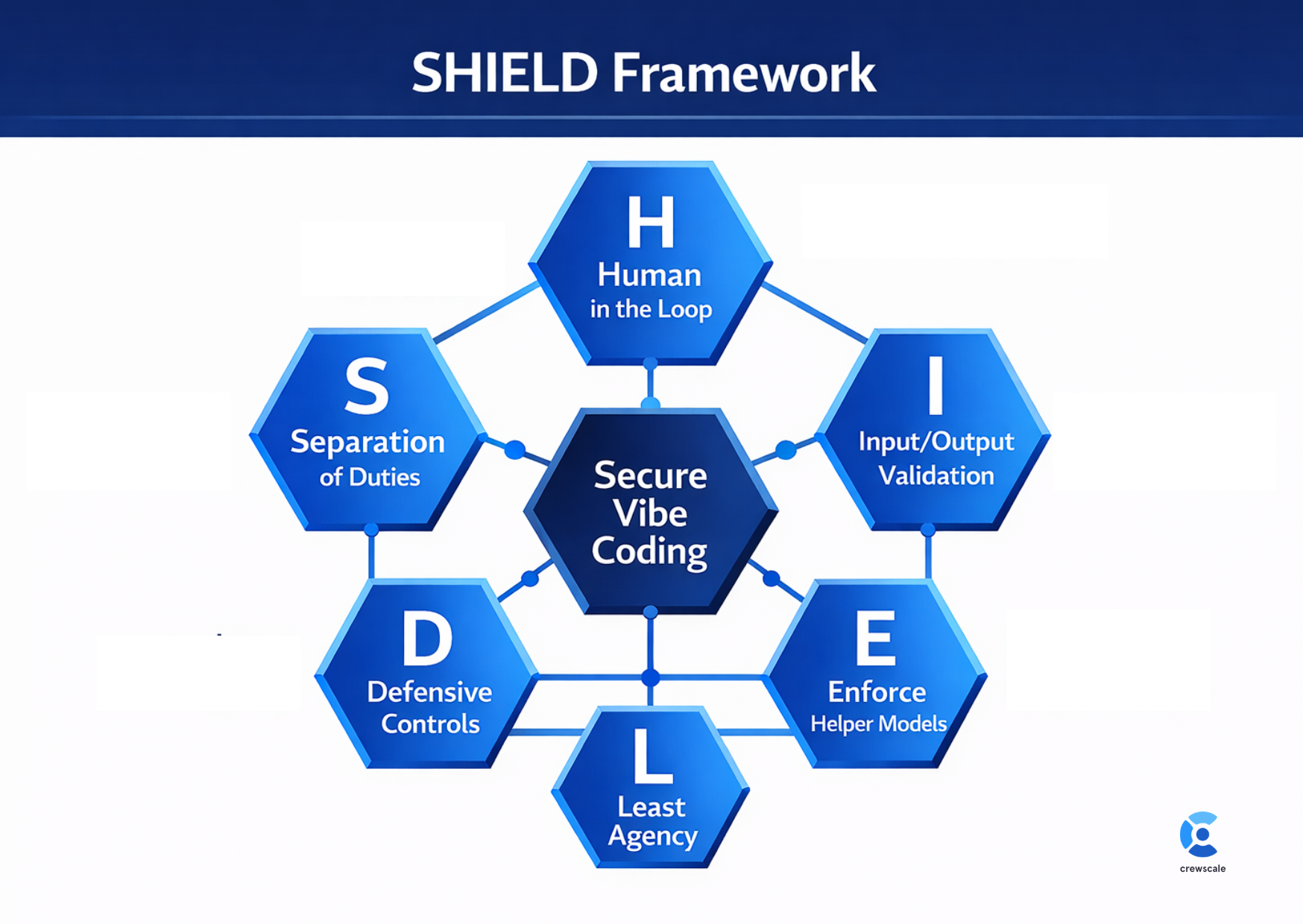

In January 2026, Palo Alto Networks' Unit 42 introduced SHIELD, a six-pillar governance framework purpose-built for vibe coding environments. Each pillar addresses a distinct failure mode observed in enterprise deployments.

SHIELD is particularly effective because it addresses the root causes identified by Unit 42: models prioritize function over security, lack situational awareness between production and development contexts, hallucinate nonexistent libraries that create phantom supply chain risks, and serve citizen developers who lack secure coding literacy.
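One inexpensive guard against hallucinated libraries is to compare declared dependencies with an internally approved list before anything is installed. The sketch below assumes a `requirements.txt`-style input and a hypothetical `APPROVED_PACKAGES` allowlist. It is not part of SHIELD itself, and the parsing is deliberately simplified.

```python
import re
from typing import Optional

# Hypothetical allowlist maintained by the security team (normalized names).
APPROVED_PACKAGES = {"requests", "flask", "sqlalchemy"}


def normalize(name: str) -> str:
    """Normalize a package name the way PEP 503 describes."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line: str) -> Optional[str]:
    """Extract the package name from one requirements line, if it has one."""
    line = line.split("#", 1)[0].strip()  # drop comments
    if not line or line.startswith("-"):  # skip blanks and pip options
        return None
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalize(match.group(0)) if match else None


def unapproved_packages(requirements_text: str) -> list:
    """Return the sorted names of declared packages missing from the allowlist."""
    names = (requirement_name(line) for line in requirements_text.splitlines())
    return sorted({name for name in names if name and name not in APPROVED_PACKAGES})
```

An allowlist catches a package the team has never vetted regardless of whether the name exists on a public index, which matters because an attacker can register a commonly hallucinated name.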

NIST SSDF and SLSA: Compliance-Ready Foundations

For enterprises that need to map vibe coding controls to regulatory requirements, two complementary frameworks provide the foundation. NIST SP 800-218A extends the Secure Software Development Framework with practices specific to generative AI and dual-use foundation models, covering the organizational, technical, and procedural safeguards required throughout the AI-augmented software development lifecycle. Meanwhile, the SLSA framework, which originated at Google and is now maintained under the OpenSSF, provides the implementation mechanics, focusing on securing source code, build processes, and deployment through provenance attestation and artifact signing. Where NIST answers the question of what to do, SLSA answers how to do it.
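As a rough illustration of what provenance attestation buys you, the sketch below checks that an artifact's SHA-256 digest matches a subject listed in an in-toto style statement, which is the envelope SLSA provenance uses. The artifact bytes and file name are invented, the predicate is left empty, and a real verifier would also validate the signature on the attestation and the builder identity. This covers only the digest comparison.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def artifact_matches_provenance(artifact: bytes, artifact_name: str, statement: dict) -> bool:
    """Return True if the statement lists this artifact name with this digest."""
    digest = sha256_hex(artifact)
    for subject in statement.get("subject", []):
        same_name = subject.get("name") == artifact_name
        same_digest = subject.get("digest", {}).get("sha256") == digest
        if same_name and same_digest:
            return True
    return False


# Illustrative data only: a made-up artifact and a trimmed-down statement.
artifact = b"example build output"
statement = {
    "_type": "https://in-toto.io/Statement/v1",
    "subject": [{"name": "app.tar.gz", "digest": {"sha256": sha256_hex(artifact)}}],
    "predicateType": "https://slsa.dev/provenance/v1",
    "predicate": {},  # build definition and run details omitted in this sketch
}
```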

Building Shared Accountability Structures

Governance frameworks only work when accountability is distributed across the organization. ArmorCode’s research on AI code governance maturity gaps recommends building formal accountability structures that unite application security, DevOps, and compliance teams. This includes shared KPIs, audit trails for how AI-generated code is reviewed and approved, and regular cross-team assessments to track policy adherence. The goal is to make security a shared operational responsibility rather than a checkpoint owned by a single team.

Building Automated Security Review Pipelines

Manual code review cannot scale to the volume of code produced by AI assistants. The solution is to automate security validation within the CI/CD pipeline so that every pull request, whether human-authored or AI-generated, passes through the same rigorous checks.

The Four-Layer Security Pipeline

- Layer 1 – Static Analysis (SAST): Run static application security testing on every pull request. Configure the pipeline to block merging when high-severity or critical findings are detected. SAST catches insecure patterns, hardcoded credentials, and OWASP Top 10 violations before code leaves the development environment.

- Layer 2 – Software Composition Analysis (SCA): Scan all dependencies, packages, and third-party components for known vulnerabilities. This is especially critical for vibe-coded applications because AI models frequently hallucinate package names or recommend outdated libraries with known CVEs. Generate a Software Bill of Materials (SBOM) for every build.

- Layer 3 – Secrets Detection: Scan for API keys, tokens, database credentials, and other secrets that AI assistants routinely embed in generated code. Block any commit that contains detected secrets and rotate compromised credentials immediately.

- Layer 4 – Dynamic Testing (DAST): Run dynamic application security testing against staging environments to catch runtime vulnerabilities that static analysis cannot detect, including authentication bypasses, injection flaws, and broken access controls.
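The four layers can feed a single merge gate. The sketch below shows one possible shape for it: a shared `Finding` record, a toy secrets scanner standing in for Layer 3, and a threshold check that blocks on high or critical findings. The names, severity labels, and the two regular expressions are assumptions for illustration. Production scanners use far larger rule sets.

```python
import re
from dataclasses import dataclass

SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class Finding:
    layer: str     # "sast", "sca", "secrets", or "dast"
    severity: str  # one of the SEVERITY_ORDER keys
    message: str


# Two illustrative patterns only; real secret scanners ship hundreds of rules.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key header": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
}


def scan_for_secrets(text: str) -> list:
    """Layer 3 stand-in: report one critical finding per matching pattern."""
    return [
        Finding("secrets", "critical", f"possible {label}")
        for label, pattern in SECRET_PATTERNS.items()
        if pattern.search(text)
    ]


def should_block_merge(findings: list, threshold: str = "high") -> bool:
    """Block when any finding is at or above the threshold severity."""
    limit = SEVERITY_ORDER[threshold]
    return any(SEVERITY_ORDER[finding.severity] >= limit for finding in findings)
```

Because every layer reports into the same `Finding` shape, the gate applies one rule to human-authored and AI-generated pull requests alike.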

Multi-Agent Security Review Workflows

A powerful emerging pattern is the multi-agent review workflow, where dedicated AI agents serve as security reviewers within the development process. In this model, Agent 1 (the Builder) generates feature code, Agent 2 (the Reviewer) analyzes the output for vulnerabilities and compliance with project security standards, and Agent 3 (the Tester) generates security-focused test cases including injection attempts, authentication bypasses, and privilege escalation scenarios. This creates a system of checks and balances where no single agent both writes and approves code.
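A minimal sketch of that separation of roles follows. The three agents are plain Python callables with stub implementations; in a real deployment each would wrap a model call, which is outside the scope of this sketch. All names here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Change:
    code: str
    author: str
    review_notes: list = field(default_factory=list)
    tests: list = field(default_factory=list)
    approved_by: Optional[str] = None


def run_workflow(
    task: str,
    builder: Callable[[str], str],
    reviewer: Callable[[str], list],
    tester: Callable[[str], list],
    builder_id: str = "builder",
    reviewer_id: str = "reviewer",
) -> Change:
    # Enforce the checks-and-balances rule: the author never approves its own code.
    if builder_id == reviewer_id:
        raise ValueError("the agent that writes code must not approve it")
    code = builder(task)
    issues = reviewer(code)
    change = Change(code=code, author=builder_id, review_notes=issues, tests=tester(code))
    if not issues:
        change.approved_by = reviewer_id
    return change


# Stub agents for illustration; real ones would call a model.
def stub_builder(task: str) -> str:
    return 'query = "SELECT * FROM users WHERE id = " + user_id'


def stub_reviewer(code: str) -> list:
    return ["SQL built by string concatenation"] if '" +' in code else []


def stub_tester(code: str) -> list:
    return ["test_rejects_sql_injection_payload", "test_requires_authentication"]
```

With the stubs above, the builder's output is flagged by the reviewer, so `approved_by` stays unset and the change cannot merge until the issue is resolved.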

Continuous Feedback Loops

The most mature vibe coding security programs build continuous feedback loops between their AI agents and their security pipelines. When the pipeline rejects a pattern, that rejection is fed back into the agent’s context so that subsequent output steers away from it. Over time, the pipeline is tuned to scan for the patterns the agents tend to produce, and the agents are steered away from the patterns the pipeline would reject. The system becomes more secure with every iteration, creating a project-specific security knowledge base that compounds in value.
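One simple way to close this loop is to keep a running tally of rejected rules and render the most frequent ones into text that is prepended to the agent's context. The sketch below assumes rule identifiers such as `sql-string-concat`, which are invented for illustration. How the text reaches the agent (a rules file, a system prompt) depends on the tool in use.

```python
from collections import Counter


class RejectionLog:
    """Project-specific memory of what the security pipeline has rejected."""

    def __init__(self) -> None:
        self._counts = Counter()

    def record(self, rule_id: str) -> None:
        """Call this each time the pipeline rejects a change for rule_id."""
        self._counts[rule_id] += 1

    def guidance(self, top_n: int = 5) -> str:
        """Render the most frequent rejections as text for the agent's context."""
        lines = [
            f"- Avoid {rule} (rejected {count} time(s) in this project)"
            for rule, count in self._counts.most_common(top_n)
        ]
        if not lines:
            return ""
        return "Patterns the security pipeline has rejected:\n" + "\n".join(lines)
```

The same tally tells the security team which scanner rules deserve tuning, since they show what the agents produce most often.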

Compliance Integration Strategies

Regulatory pressure on AI-generated code is intensifying across jurisdictions. Enterprises must map their vibe coding governance controls to existing and emerging compliance requirements to avoid legal exposure and demonstrate due diligence.

Regulatory Landscape

Beyond Vibe Compliance

Enterprises must avoid what researchers call “vibe compliance”: the practice of using AI to generate compliance documentation without genuine risk management behind it. Just as vibe coders outsource coding to AI, some organizations are outsourcing compliance paperwork to the same tools. Automation cannot replace human oversight, expert review, and rigorous testing. Maintaining comprehensive audit trails that document AI usage, the prompts given, the code generated, and the security reviews performed is imperative for both internal accountability and regulatory defense.
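An audit trail of this kind can be as simple as an append-only list of records, each linked to the previous one by a hash so that later edits are detectable. The sketch below is one possible design, not a prescribed format. The field names are assumptions, and it stores digests of the prompt and code rather than the text itself, on the assumption that the full content lives in a separate access-controlled store.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    tool: str
    prompt_sha256: str
    code_sha256: str
    reviewer: str
    review_outcome: str
    previous_record_sha256: str  # empty string for the first record


def digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def record_digest(record: AuditRecord) -> str:
    # sort_keys gives a stable serialization, so the digest is reproducible.
    return digest(json.dumps(asdict(record), sort_keys=True))


def append_record(trail: list, *, tool: str, prompt: str, code: str,
                  reviewer: str, review_outcome: str) -> AuditRecord:
    """Append a record that links to the digest of the record before it."""
    previous = record_digest(trail[-1]) if trail else ""
    record = AuditRecord(tool, digest(prompt), digest(code),
                         reviewer, review_outcome, previous)
    trail.append(record)
    return record


def chain_is_intact(trail: list) -> bool:
    """Check every link; editing an earlier record breaks the link after it."""
    expected = ""
    for record in trail:
        if record.previous_record_sha256 != expected:
            return False
        expected = record_digest(record)
    return True
```

The chain on its own does not protect the most recent record, so the latest digest would need to be anchored somewhere outside the trail, for example in a signed release note.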

A Practical Implementation Roadmap

For enterprises beginning their vibe coding governance journey, the following phased approach provides a structured path from assessment to maturity.

Phase 1: Discovery and Risk Assessment (Weeks 1–4)

- Inventory all AI coding tools currently in use, including shadow AI deployments.

- Perform a formal risk assessment by mapping each tool’s capabilities to your threat model.

- Establish an approved list of AI coding assistants and define usage policies.

- Prohibit pasting sensitive IP, PII, or credentials into public AI models.

Phase 2: Pipeline Integration (Weeks 5–10)

- Embed SAST, SCA, secrets scanning, and SBOM generation into CI/CD pipelines.

- Configure merge-blocking rules for high-severity and critical findings.

- Deploy security-focused helper agents for automated pre-merge validation.

- Implement AI code classification to tag and track machine-generated code.
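For the classification step, one lightweight option is a commit-message trailer that records which assistant produced a change. The sketch below assumes a hypothetical `AI-Assistant:` trailer convention that your teams would have to adopt. It is not a Git or vendor standard, and it only captures what authors or tools choose to declare.

```python
from typing import Optional


def ai_tool_from_commit(message: str) -> Optional[str]:
    """Return the value of a hypothetical 'AI-Assistant:' trailer, if present."""
    for line in reversed(message.strip().splitlines()):
        if not line.strip():
            break  # trailers live in the final paragraph of the message
        key, separator, value = line.partition(":")
        if separator and key.strip().lower() == "ai-assistant":
            return value.strip() or None
    return None


def ai_tagged_share(messages: list) -> float:
    """Fraction of commits that declare an AI assistant in their trailer."""
    if not messages:
        return 0.0
    tagged = sum(1 for message in messages if ai_tool_from_commit(message))
    return tagged / len(messages)
```

Tagged commits can then be routed to stricter review rules or revisited in bulk when a new vulnerability pattern emerges.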

Phase 3: Governance Maturity (Weeks 11–16)

- Adopt the SHIELD framework or equivalent governance model across all development teams.

- Map controls to NIST SSDF, SLSA, and applicable regulatory requirements.

- Establish shared KPIs and audit trails across AppSec, DevOps, and compliance functions.

- Launch developer training and certification on secure AI-assisted development.

Phase 4: Continuous Improvement (Ongoing)

- Build feedback loops between security pipelines and AI agents.

- Conduct regular cross-team assessments and governance reviews.

- Re-evaluate AI-generated code sections when new vulnerability patterns emerge.

- Track governance maturity metrics and report to executive leadership.

Conclusion

The vibe coding revolution is not slowing down. The enterprises that thrive will be those that treat AI-generated code with the same governance rigor they apply to any other critical business asset. By adopting structured frameworks like SHIELD, building automated multi-layered security pipelines, and mapping controls to regulatory requirements, organizations can capture the transformative speed of AI-assisted development without inheriting its risk.

The playbook is clear: discover your AI coding exposure, automate security validation at every stage of the pipeline, distribute accountability across teams, and build feedback loops that make the system smarter over time. Vibe coding is here to stay. The only question is whether your governance will keep pace.